New deals for science!

On May 10 and 11, the AKASHA Hub Barcelona hosted a “STEPS” workshop. “STEPS is a grassroots, non-profit series of events focusing on the exploration of blockchain-backed applications for science, technology, education, publishing, and society,” co-organized by “Blockchain for Science,” a think tank founded by Sönke Bartling. This is not the first time the AKASHA team has been in contact with the Blockchain for Science crowd: back in 2018, we hosted several projects (among them DEIP, Science Matters, and TIB) for a week-long gathering at our former Bucharest Hub.

Back to summer 2018: The AKASHA team hosts scientists and Blockchain for Science projects in Bucharest.

This time around, our now fully operational AKASHA Barcelona Hub served as the venue for several exciting projects dedicated to applying blockchain technologies to science and knowledge creation. We’ve included a number of pictures from the event in this post, so you can see what went on for yourself!

Crypto-Renaissance @AKASHA Hub Barcelona: Phoebe Tickell, Sönke Bartling, and Theo Beutel in front of a copy of "Future Cryptoeconomics" featuring Vitalik Buterin.

Why are we focussing on collaboration and communication in science?

Despite increasing innovation in scientific communication (see, for instance, eLife), the majority of the scientific community still considers commercial scientific publishers an indispensable third party that ensures “quality” and “reproducibility” in science. This is despite growing evidence of the poor outcomes and strong biases that result from centuries-old publishing businesses relying on a format of information delivery originally shaped by the printing press. Today, modern web technologies offer entirely new ways for the scientific community to self-organize. Scientists could collaboratively create up-to-date information and reach consensus on specific topics much more rapidly. The online encyclopedia Wikipedia, which has almost entirely replaced its printed predecessors, is the most prominent example that there is a better way of doing things: it has been shown to match or even exceed them in quality, it is always up to date, and its documentation is decentralized.

Pepo Ospina and Andrei Sambra discussing Hyperdata and uprtcl.io

It might come as a surprise that scientific journals adopted formal “peer-review” mechanisms only about 50 years ago.

Over this period, scientific publishers have drifted away from their primary goal of serving the scientific community by disseminating knowledge. Instead, they act as gatekeepers, operate selectively, and extract increasing profits from public institutions. Today, metrics such as impact factors and journal prestige dominate many scientific fields. Peer review does not offer the quality control it claims to, while publishers massively slow the dissemination of information. As MIT Media Lab Director Joi Ito puts it: “We learn more and more about less and less.” With reproducibility rates dropping, and with essentially wrong and misleading information continuing to accumulate as the basis for our new hypotheses, we may already have entered the dark ages of science once more (I recommend Thomas Kuhn’s thesis on the phlogiston theory in that context).

The teams we hosted in Barcelona focussed on different aspects of disrupting this industry and creating new, open, and interoperable ways of sharing knowledge on the distributed Web. Their efforts towards open, collaborative platforms for knowledge exchange, cooperation, scientific funding, and governance resonate well with the aims and values of the AKASHA Foundation, which seeks to nurture projects that unlock individual potential and collective intelligence.

The buzzing AKASHA Barcelona Hub, despite international and local strikes. The DAO team (Francesca, Theo, and Kate) in the front left on Friday afternoon.

Pepo Ospina and Guillem Cordoba from uprtcl.io presented _Prtcl and collective.one: a collaborative platform for content creation and sharing that lets participants reuse and recombine content from any user and any platform, and that enables governance over content created by multiple authors.

Notably, the same data can be viewed from different perspectives that assume different “contexts.” Following Pepo, Andrei Sambra presented “Hyperdata,” a protocol currently under development at the AKASHA Foundation. It provides a storage- and network-agnostic data model and schema(s) for linking data across different networks and platforms. Through Hyperdata, a piece of data hosted on the Web could be linked to another hosted on the InterPlanetary File System (IPFS), which in turn may be linked to data hosted on any other network, or even on your local file system (file://).

Both presentations resonated strongly with the audience, and the connection between Hyperdata and the team working on _Prtcl became especially apparent. The reason is that we all share a common vision of storing data in a network-agnostic way. When it came to choosing an underlying data model, the uprtcl.io team had been planning to use their own JSON schema. However, during the discussion the group realized that this content could all be stored in the payload of a Hyperdata document, which would at the same time let it benefit from the signature that Hyperdata documents carry.
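To make the idea of a network-agnostic, signed document more concrete, here is a minimal Python sketch. It is not Hyperdata's actual schema or API, which this post does not describe: the `LinkedDocument` class, its fields, and the example URIs are hypothetical. It shows an opaque payload (where something like a uprtcl.io JSON object could live), links stored as plain URIs whether they point to the Web, IPFS, or the local file system, and a signature covering both. To stay within Python's standard library, HMAC-SHA256 stands in for what would presumably be a public-key signature in a real protocol.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field
from urllib.parse import urlparse


# Hypothetical document shape, NOT the real Hyperdata schema.
@dataclass
class LinkedDocument:
    payload: dict                                   # opaque content, e.g. a uprtcl-style JSON object
    links: list[str] = field(default_factory=list)  # URIs on any network (https, ipfs, file, ...)
    signature: str = ""

    def canonical_bytes(self) -> bytes:
        # Deterministic serialization, so identical content always yields identical bytes
        return json.dumps(
            {"payload": self.payload, "links": self.links},
            sort_keys=True,
            separators=(",", ":"),
        ).encode()

    def sign(self, key: bytes) -> None:
        # Stand-in for a real signature: HMAC-SHA256 over the canonical bytes
        self.signature = hmac.new(key, self.canonical_bytes(), hashlib.sha256).hexdigest()

    def verify(self, key: bytes) -> bool:
        # Recompute the MAC and compare in constant time
        expected = hmac.new(key, self.canonical_bytes(), hashlib.sha256).hexdigest()
        return hmac.compare_digest(expected, self.signature)

    def networks(self) -> set[str]:
        # The link model is agnostic about where targets live; it only records the URI scheme
        return {urlparse(uri).scheme for uri in self.links}
```

Because links are just URIs, supporting another network only means accepting another scheme. Since the signature covers the canonical serialization of both payload and links, editing either one after signing makes `verify` return `False`.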

Adrià Massanet took us on an exciting journey into cryptography, focussing on zk-SNARKs. We learned about the challenges in the field as he walked us through examples in two zk-SNARK toolboxes on Ethereum: SnarkJS/Circom and ZoKrates. The concept behind the CODA blockchain, which Adrià mentioned, is compelling: the validity of the whole blockchain is proven through small zk-SNARKs, effectively solving the blockchain size problem, which might contribute to scalability as well. However, while CODA seems to run smoothly, Adrià reminded us that it will be hard to find experts who can actually establish that the zk-SNARK algorithms really do what they are supposed to do.

Adrià presenting zk-SNARK principles.

Daniel Shavit from Pando Network outlined the challenges facing today's creative industries and how Pando Network's infrastructure could help. The team is creating DApps that operate on the underlying Pando protocol, a distributed version control system (VCS) based on IPFS, Aragon, and Ethereum.

Our discussions then focused specifically on academic publishing and on how Decentralized Autonomous Organizations (DAOs) for science publishing could be created on Pando. To this end, Pando is proposing the Contributive Commons License. Following the presentations in the morning, we were pleased to host Francesca Pick and Kate Beecroft from DAOstack, as well as Theo Beutel from DAOincubator, who took us on a co-creative journey to explore three DAO concepts for science funding, publishing, and education, respectively.

Dani presenting for Pando.

In the blockchain space, DAOs are among the most discussed concepts, but also among the least mature. According to Jack Laing, a DAO is an organization “without any central point of control” that is “resistant to interference” and makes use of “the collective input of its stakeholders.” Note that these are normative statements that do not specify how such outcomes may be reached. In a way, ‘DAO’ is an umbrella term for any (existing or future) solution capable of combining blockchains and their objective consensus with subjective consensus reached through human coordination, thereby providing an interface to the real world.

Such interfaces are highly anticipated and, once functional and mature, could be applied to a variety of use cases in science and research where a shift from centralized to decentralized power is deemed desirable. However, the devil really is in the details, which is why the workshop used ‘DAO Kitchen’, a template by Felipe Duarte, to design a DAO bottom-up based on problem statements and goal setting.

Two DAO concepts proved particularly popular: a publishing DAO and a funding DAO. The former aims to disintermediate journals in order to remove high access barriers, inefficient processes, and opaque decision making. Such a DAO could connect to a public, tamper-proof file-hosting service such as IPFS and crowdsource the curation of papers into journals. The participants also proposed combining liberal reuse with inherited attribution.

The concept of a funding DAO focused on reducing bureaucratic friction in science funding and providing additional incentives for useful research activities. During the workshop, this concept was narrowed down to a specific problem context: the replication crisis. A DAO that allocates funds only for replication studies could dynamically adjust to the demand for replication and the supply of reputable researchers, and thereby significantly improve science compared with today, where replication is rarely as appealing to scholars as novel research.

Despite limited time, the workshop produced multiple remarkably elaborate DAO concepts, which the teams are now taking forward and which may soon become reality as part of an experimental trial.

Our DAO experts Francesca, Kate and Theo.

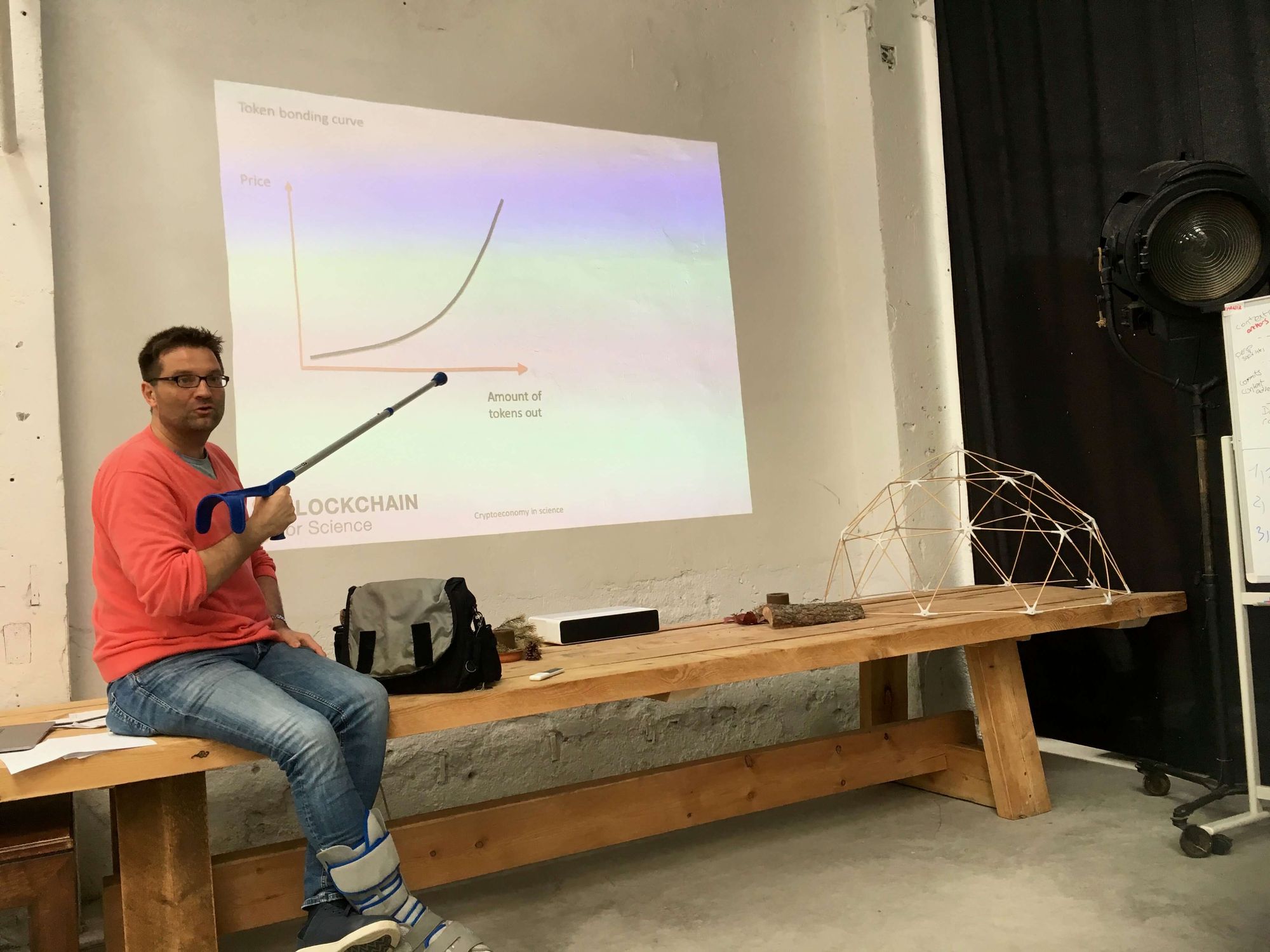

The second day focussed on hands-on applications in scientific funding for collaborative research. Sönke Bartling described the dire state of current scientific funding models and how complicated grant-writing procedures hamper scientific progress and waste massive amounts of researchers' resources (by one assessment, the cost of writing scientific grant applications equals or even exceeds the amount of funding awarded). On this topic, we also received remote contributions from Prof. Stefan Krauss of the Institute of Basic Medical Sciences at the University of Oslo, and from Lambert Heller, Head of the Open Science Lab at the Leibniz Information Centre for Science and Technology.

Sönke Bartling also introduced an exciting concept: creating “new deals on data.” These could provide new approaches to data handling, privacy, and data value, while making currently unused data available. Data handling is distributed from a single entity to many, and smart contracts can be used to provably constrain access to the data (e.g. to respect privacy). Token economies may create novel incentive structures that improve data sharing and quality assurance. Think of it as bringing the computation to the data instead of leaking the data to data processors.
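The idea of bringing computation to the data can be illustrated with a minimal Python sketch. It assumes a hypothetical `DataCustodian` that keeps raw records private, accepts only whitelisted aggregate computations, and refuses queries that match fewer than a minimum number of records. In the setting described above, such an access policy would be enforced by a smart contract rather than a Python class; the class name, the default threshold of 5, and the two approved computations are illustrative assumptions.

```python
from statistics import mean
from typing import Callable, Sequence


class DataCustodian:
    """Hypothetical data holder: runs approved computations, never returns raw records."""

    def __init__(self, records: Sequence[dict], min_group_size: int = 5):
        self._records = list(records)          # raw data stays inside the custodian
        self._min_group_size = min_group_size  # assumed privacy threshold
        # Whitelist of approved computations: name -> function over a list of numbers
        self._allowed: dict[str, Callable[[list], float]] = {
            "count": lambda xs: float(len(xs)),
            "mean": mean,
        }

    def run(
        self,
        computation: str,
        field_name: str,
        where: Callable[[dict], bool] = lambda record: True,
    ) -> float:
        # Reject anything not on the whitelist
        if computation not in self._allowed:
            raise PermissionError(f"computation {computation!r} is not approved")
        values = [record[field_name] for record in self._records if where(record)]
        # Reject queries narrow enough to single out a few individuals
        if len(values) < self._min_group_size:
            raise PermissionError("query matches too few records to release a result")
        return self._allowed[computation](values)
```

A researcher can, for example, ask for the mean of a field across all records, but an unapproved computation such as `max`, or a filter that matches only a handful of records, raises `PermissionError`, and the raw records are never handed out.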

Sönke Bartling on "New deals on data"

David Bovill, who among other remarkable projects invented the concept behind the blockchain-backed Plantoids, has experimented with liquid democracy, and is currently also engaged with DEIPworld, outlined his vision of a “governance journal.” It would be backed by a three-tiered system, starting with public, open deliberation, followed by a transparent, evidence-driven, and decentralized peer-reviewed consensus process, which should then be embedded in any decision-making process.

Phoebe Tickell and David Bovill debating new means of governance for scientific organizations.

How we could govern science differently is a question that remains under-explored and under-researched. Giving the public a say in what tax-funded research focuses on is one capability that participatory or liquid democracy voting systems could introduce.
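Liquid democracy combines direct voting with transitive delegation: each participant either votes directly or delegates their vote to someone else, who may in turn delegate further. The following minimal Python sketch shows only the tallying step. The function name, the option labels in the example, and the rule that delegation cycles and dead ends count as abstentions are our own assumptions; real systems handle these edge cases in different ways.

```python
from collections import Counter


def tally_liquid(direct_votes: dict[str, str], delegations: dict[str, str]) -> Counter:
    """Resolve transitive delegations and count one vote per participant.

    direct_votes: participant -> option they chose themselves
    delegations:  participant -> participant they delegate to
    A direct vote overrides that participant's own delegation (assumption).
    Chains that loop, or end at someone who neither votes nor delegates,
    are counted as abstentions (assumption).
    """
    participants = set(direct_votes) | set(delegations)
    totals: Counter = Counter()
    for person in participants:
        seen: set[str] = set()
        current = person
        # Follow the delegation chain until it reaches someone who voted directly
        while current not in direct_votes:
            if current in seen or current not in delegations:
                current = None  # cycle or dead end: this participant abstains
                break
            seen.add(current)
            current = delegations[current]
        if current is not None:
            totals[direct_votes[current]] += 1
    return totals


if __name__ == "__main__":
    votes = {"alice": "fund-replication", "bob": "fund-novel"}
    delegations = {
        "carol": "alice",
        "dave": "carol",   # dave -> carol -> alice
        "erin": "bob",
        "frank": "gina",   # frank and gina delegate to each other
        "gina": "frank",
    }
    print(tally_liquid(votes, delegations))
```

In this example, carol and dave end up voting with alice, erin votes with bob, and frank and gina abstain because their delegations form a cycle.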

Offering researchers platforms for joint deliberation and decision making is also hugely exciting, and even existing off-chain platforms such as Loomio could serve this purpose. So much research happens in silos, and making better use of tools and platforms that encourage communication, collective governance, and the emergence of collective intelligence could completely change how global-scale research is coordinated and executed. Labs could collaborate in multi-stakeholder experimentation and discovery. The potential impact on research and its scope could be enormous. Phoebe Tickell, previously a researcher in synthetic biology at Imperial College London and now a researcher and consultant in distributed governance, contributed perspectives from existing distributed governance experiments such as Enspiral and from members of the DGOV community.

Jens Ducrée, Professor of Microsystems in the School of Physical Sciences at Dublin City University (DCU), advocated starting experiments right away. The group explored tapping into the so far underused potential of many laboratories, including bio-hacklabs, for collaborative projects at the interface between engineering and biomedical sensing devices. Resources from both academic labs and “hack-labs” could be brought together using DAO-like governance mechanisms. Microfabrication, bioengineering, and lab automation and miniaturization, fields that already apply mature digital planning and simulation tools today, could be a powerful test bed for exploring how a new generation of scientists might use peer-to-peer collaboration and funding, and how they might acknowledge idea givers, and even citizens in the maker space, for the diversity and value of their contributions to science.

Prof. Jens Ducrée of FPC@DCU, Dublin City University, Ireland.

The Web was, in fact, born as a means of improving communication and coordination among scientists. Amid the current “social media” crisis and the collective time wasted globally every day, this seems hard to imagine. With small groups such as the one that just met in Barcelona working tirelessly towards new, privacy-preserving, and valuable means of creating, collecting, and sharing knowledge, there is hope we may get this back on track. The workshop ended with several attendees arranging follow-ups and hands-on trials of DAOs and new means of scientific communication. AKASHA is excited to be part of these early experiments and will report on these next steps in future blog posts.

Link to presentations hosted on ZENODO.

Goodbye Barcelona!